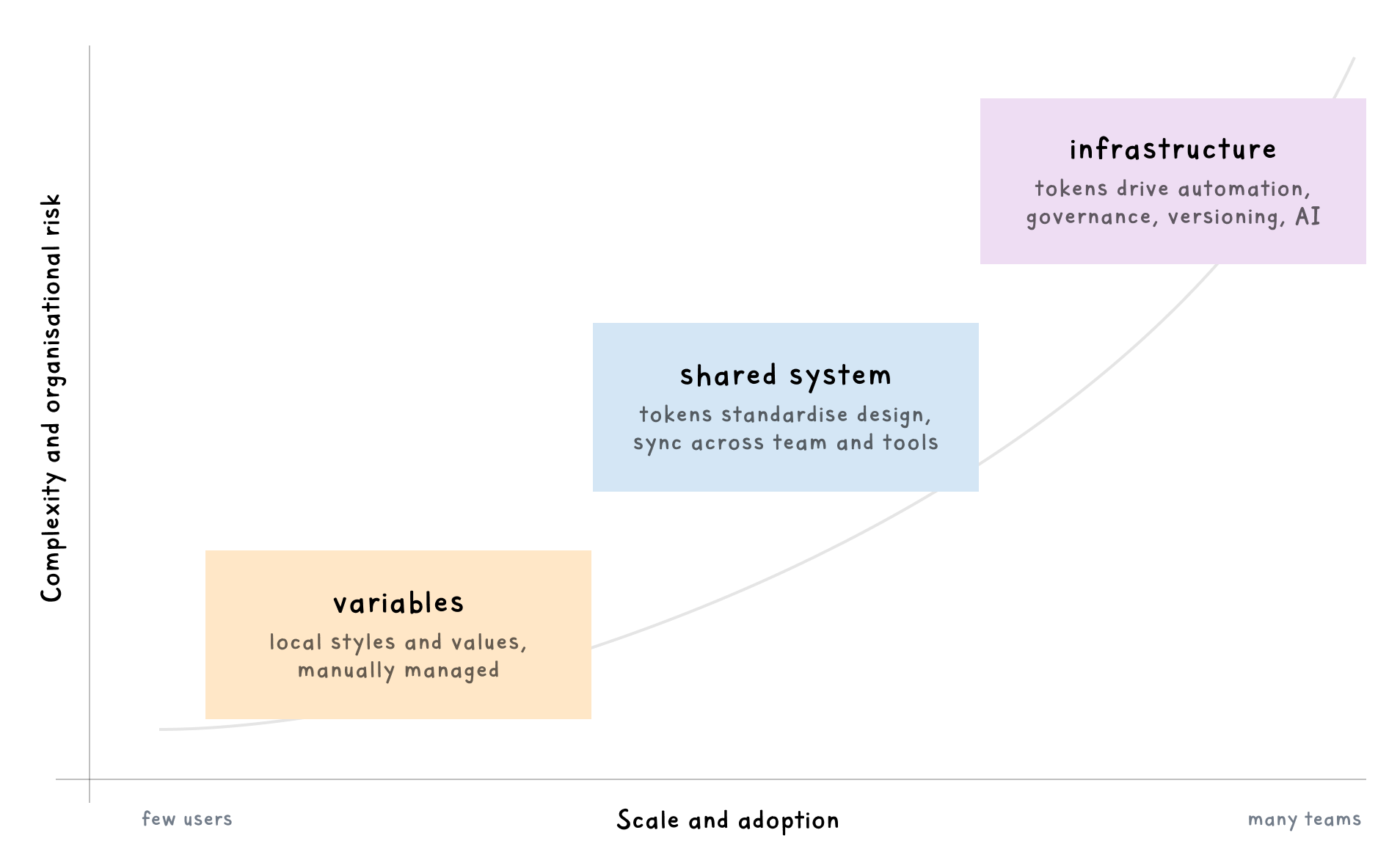

There’s a moment in every growing design system where tokens stop being helpful and start being critical. That moment defines your future stability.

Maybe it’s when three teams start using the same colour token for completely different purposes. Or when someone suggests changing a spacing value and everyone goes quiet, mentally calculating what might break.

What starts as a way to standardise colours and spacing becomes something bigger. Your tokens aren’t just design decisions anymore. They’re infrastructure.

The shift nobody prepared for

Most teams treat design tokens like enhanced style guides. A collection of colours, typography scales, and spacing values that keep things consistent. For a while, that works.

But systems grow. More products adopt your tokens. More teams develop opinions in their own contexts. As new platforms spin up – iOS, Android, web, internal tools – each one needs the system to keep pace. Engineering builds automation around it. And suddenly, changing a single token isn’t just a design decision – it’s a deployment that touches production systems across your organisation.

And that’s the threshold – your tokens have become infrastructure.

What makes tokens infrastructure

Infrastructure isn’t about what you create – it’s about what relies on it.

When multiple teams depend on your tokens, when coordination becomes part of every change, when breaking something means breaking production – that’s infrastructure.

Here’s what it looks like:

- Multiple consumers downstream – Your tokens feed products, platforms, and tools that expect them to be predictable.

- Versioning matters – You can’t update values and hope everything works. You need deprecation policies and migration paths.

- Breaking changes cost real time – A poorly planned token update triggers emergency fixes, delayed releases, and coordination overhead. Design tokens don’t fail because they’re wrong – they fail because they outgrow their governance.

- Someone needs to own it – Without clear governance, tokens drift. Decisions get made in isolation. Things break.

- Machines need to read it – Build pipelines, deployment systems, and AI tools rely on tokens being consistently structured.

If any of this sounds familiar, you’re probably seeing entropy – the small inconsistencies that multiply when structure can’t keep up. And once that starts, every change feels riskier than the one before it.

I wrote about this kind of entropy in more detail in a previous article.

The gap between design and infrastructure

The shift happens quietly. Your token system works brilliantly at 50 tokens. Then you hit 250. What worked for two products starts breaking down at five. There’s no versioning strategy, no process for breaking changes, and no clear ownership.

In a system I worked on last year, we hit this threshold when the team needed to add support for a third brand. What seemed like a simple token update cascaded into a three-month project, touching every product in the ecosystem. Each release came with that familiar tension – did we miss something?

We’d built tokens for consistency, not for evolution.

That project taught me about the cost of designing for stability instead of adaptability. It’s a mistake you only make once.

The gap between “design variables” and “infrastructure” is where the pain lives.

Treat tokens like APIs

The moment tokens touch production, you’re not designing colours anymore. You’re designing contracts.

And like any contract, tokens need to be explicit about what they promise, what happens when they change, and how consumers should adapt.

Versioning and documentation

Tokens get consumed programmatically, like API endpoints. Three practices separate systems that scale from systems that break.

1. Version everything

Use semantic versioning (v1.2.0) and make it visible. When someone references color.brand.primary, they should know which version they’re using – and what changes come with it.

A minor version bump (v1.1.0 to v1.2.0) might add new tokens. A major version bump (v1.0.0 to v2.0.0) signals breaking changes that need migration work.
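
To make the rule concrete, here is a minimal TypeScript sketch that diffs two releases of a flattened token set and suggests a version bump. It assumes tokens are a flat map from dot-path name to `{ type, value }`, and it treats a changed value as a patch. That last choice is an assumption: many teams reasonably treat visible value changes as minor or even major.

```typescript
// A flattened token: the dot-path name is the map key.
interface Token {
  type: string;
  value: string;
}

type TokenSet = Record<string, Token>;
type Bump = "major" | "minor" | "patch" | "none";

// Suggest a semantic version bump by diffing two releases of a token set.
// Assumption: removed tokens or changed types break consumers (major),
// new tokens are additive (minor), and changed values are patches.
function suggestBump(previous: TokenSet, next: TokenSet): Bump {
  let bump: Bump = "none";

  for (const name of Object.keys(previous)) {
    const after = next[name];
    if (after === undefined || after.type !== previous[name].type) {
      return "major"; // anything referencing this name would break
    }
    if (after.value !== previous[name].value) {
      bump = "patch";
    }
  }

  for (const name of Object.keys(next)) {
    if (!(name in previous)) {
      bump = "minor"; // additions outrank value tweaks
    }
  }

  return bump;
}

const v1: TokenSet = {
  "color.brand.primary": { type: "color", value: "#0055ff" },
  "spacing.base": { type: "dimension", value: "8px" },
};

const v2: TokenSet = {
  ...v1,
  "color.brand.secondary": { type: "color", value: "#ff5500" },
};

console.log(suggestBump(v1, v2)); // logs: minor
```

A check like this fits naturally into a release pipeline, so the version number reflects what actually changed rather than what someone remembered to declare.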

The W3C Design Tokens Community Group is close to v1.0.0 of its specification, a standardised format that makes this approach consistent across tools.
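
In DTCG-style files, tokens sit in nested groups, and a token is an object carrying a `$value` (usually alongside a `$type`); dot-path names like `color.brand.primary` come from walking those groups. A minimal sketch of that walk, covering only a simplified subset: it ignores group-level `$type` inheritance, and recent versions of the spec define richer value structures for colours and dimensions than the plain strings used here.

```typescript
// Simplified view of a DTCG-style token file: groups nest, and any object
// carrying a "$value" key is treated as a token.
type TokenNode = { [key: string]: unknown };

interface FlatToken {
  path: string;
  type?: string;
  value: unknown;
}

function isRecord(x: unknown): x is TokenNode {
  return typeof x === "object" && x !== null && !Array.isArray(x);
}

// Walk nested groups and return tokens with their dot-path names.
// Keys starting with "$" are properties, not child groups.
function flattenTokens(node: TokenNode, prefix: string[] = []): FlatToken[] {
  if ("$value" in node) {
    const type = node["$type"];
    return [{
      path: prefix.join("."),
      type: typeof type === "string" ? type : undefined,
      value: node["$value"],
    }];
  }
  const tokens: FlatToken[] = [];
  for (const [key, child] of Object.entries(node)) {
    if (key.startsWith("$") || !isRecord(child)) continue;
    tokens.push(...flattenTokens(child, [...prefix, key]));
  }
  return tokens;
}

const file: TokenNode = {
  color: {
    brand: {
      primary: { $type: "color", $value: "#0055ff", $description: "Primary CTAs only" },
    },
  },
  spacing: {
    base: { $type: "dimension", $value: "8px" },
  },
};

for (const token of flattenTokens(file)) {
  console.log(token.path, token.type, JSON.stringify(token.value));
}
```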

2. Document with contracts

Document what each token does, when to use it, what happens if it changes, and what alternatives exist.

If button.background.primary is only for primary CTAs, say that. If changing spacing.base will affect 47 components, document it.

API endpoints have required parameters and expected responses. Tokens need the same level of clarity.
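
One way to make that clarity enforceable is to keep the contract next to the value and flag gaps during review. The sketch below uses a hypothetical `TokenContract` shape; the field names are illustrative rather than taken from any spec (in a DTCG-style file, guidance would usually live in `$description`, with extra metadata under `$extensions`), and the sample values are invented.

```typescript
// Hypothetical contract fields; adapt the names to your own documentation model.
interface TokenContract {
  name: string;
  value: string;
  description?: string;    // what the token is for
  usage?: string[];        // where it may be used
  avoid?: string[];        // where it must not be used
  consumers?: number;      // known dependents affected by a change
  alternatives?: string[]; // what to use instead
}

// Return readable gaps so reviews can block undocumented tokens.
function contractGaps(token: TokenContract): string[] {
  const gaps: string[] = [];
  if (!token.description?.trim()) gaps.push(`${token.name}: missing description`);
  if (!token.usage || token.usage.length === 0) gaps.push(`${token.name}: no documented usage`);
  if (token.consumers === undefined) gaps.push(`${token.name}: impact of change unknown`);
  return gaps;
}

const tokens: TokenContract[] = [
  {
    name: "button.background.primary",
    value: "{color.brand.primary}",
    description: "Background for primary call-to-action buttons.",
    usage: ["Primary CTAs"],
    avoid: ["Secondary actions", "Decorative surfaces"],
    consumers: 12, // illustrative number
    alternatives: ["button.background.secondary"],
  },
  { name: "spacing.base", value: "8px" },
];

for (const gap of tokens.flatMap(contractGaps)) console.log(gap);
```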

3. Plan for deprecation

You can’t support every token forever. Decide upfront how long deprecated tokens stick around (six months? a year?), what the migration path looks like, and how you’ll communicate changes.

Establish clear upgrade paths – marking tokens as deprecated, providing alternatives, and eventually removing them when the transition period ends.
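
A deprecation policy is easier to honour when it lives in data rather than on a wiki page. A minimal sketch, assuming a hypothetical registry in which each deprecated token records its replacement and sunset date; the registry shape and the `deprecationStatus` helper are our own, not part of any tool.

```typescript
interface Deprecation {
  replacement: string;
  sunset: string; // ISO date (YYYY-MM-DD) on which the token is removed
}

// Hypothetical registry: deprecated name -> replacement and sunset date.
const deprecations: Record<string, Deprecation> = {
  "color.blue": { replacement: "color.brand.primary", sunset: "2025-06-30" },
};

type Status =
  | { kind: "active" }
  | { kind: "deprecated"; replacement: string; daysLeft: number }
  | { kind: "removed"; replacement: string };

// Classify a token reference as of a given date.
function deprecationStatus(name: string, today: Date): Status {
  const entry = deprecations[name];
  if (!entry) return { kind: "active" };
  const sunset = new Date(`${entry.sunset}T00:00:00Z`);
  const msPerDay = 24 * 60 * 60 * 1000;
  const daysLeft = Math.ceil((sunset.getTime() - today.getTime()) / msPerDay);
  return daysLeft > 0
    ? { kind: "deprecated", replacement: entry.replacement, daysLeft }
    : { kind: "removed", replacement: entry.replacement };
}

const blueStatus = deprecationStatus("color.blue", new Date("2025-05-31T00:00:00Z"));
if (blueStatus.kind === "deprecated") {
  // logs: color.blue is deprecated; use color.brand.primary (30 days left)
  console.log(`color.blue is deprecated; use ${blueStatus.replacement} (${blueStatus.daysLeft} days left)`);
}
```

The same status can drive build warnings during the transition period and hard failures once the sunset date has passed.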

Plan for deprecation and migration

Breaking changes will happen – a token rename for clarity, a colour system overhaul, a spacing scale that no longer fits mobile.

When this happens, treat it like a product launch. Announce the change early, explain the reasoning, and provide clear migration steps that teams can follow without reverse-engineering your intent.

The practical approach: support both old and new tokens simultaneously during transition periods. Mark deprecated tokens clearly in your documentation. Set a sunset date and stick to it. Give teams three to six months to migrate – enough time to plan the work, not so much that it gets forgotten.
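
On the web, one low-cost way to ship old and new names side by side is to keep emitting the deprecated CSS custom property as an alias of its replacement until the sunset date. A sketch, assuming CSS variable names are derived from token paths by swapping dots for hyphens (a convention assumed here, not one the article prescribes):

```typescript
interface AliasRule {
  from: string;   // deprecated token path
  to: string;     // replacement token path
  sunset: string; // ISO date (YYYY-MM-DD); the alias is dropped from this date on
}

// Convert a dot-path token name into a CSS custom property name.
const cssVar = (path: string): string => `--${path.replace(/\./g, "-")}`;

// Emit :root declarations for current tokens plus temporary aliases
// so deprecated names keep working during the migration window.
function buildCss(tokens: Record<string, string>, aliases: AliasRule[], today: string): string {
  const lines = Object.entries(tokens).map(([path, value]) => `  ${cssVar(path)}: ${value};`);
  for (const alias of aliases) {
    // ISO dates in YYYY-MM-DD form compare correctly as strings.
    if (today < alias.sunset) {
      lines.push(`  /* deprecated: use ${cssVar(alias.to)}, removed on ${alias.sunset} */`);
      lines.push(`  ${cssVar(alias.from)}: var(${cssVar(alias.to)});`);
    }
  }
  return `:root {\n${lines.join("\n")}\n}`;
}

const css = buildCss(
  { "color.brand.primary": "#0055ff" },
  [{ from: "color.blue", to: "color.brand.primary", sunset: "2025-06-30" }],
  "2025-05-01",
);
console.log(css);
```

Because the alias is a `var()` reference rather than a copied value, teams that haven't migrated yet still pick up any change to the replacement token, and the alias disappears on its own once the sunset date passes.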

Document everything. What’s changing. Why it’s changing. What teams need to do. Which products are affected. Where to get help. The teams that migrate smoothly aren’t lucky – they’re informed.
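
Answering “which products are affected” is largely a search problem. A minimal sketch that scans source text for references to deprecated token paths; the product names and file contents are invented, and a real report would read files from each repository and also match platform-specific forms such as CSS variables or Swift constants.

```typescript
// Map each product to the contents of its source files (invented sample data).
type Sources = Record<string, string[]>;

function escapeRegExp(text: string): string {
  return text.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
}

// Report, per deprecated token, which products still reference it.
function affectedProducts(sources: Sources, deprecated: string[]): Map<string, string[]> {
  const report = new Map<string, string[]>();
  for (const token of deprecated) {
    // Match the whole token path only, so "color.blue" doesn't match "color.blue-new".
    const pattern = new RegExp(`(?<![\\w.-])${escapeRegExp(token)}(?![\\w.-])`);
    const products = Object.keys(sources).filter((product) =>
      sources[product].some((content) => pattern.test(content)),
    );
    if (products.length > 0) report.set(token, products);
  }
  return report;
}

const sources: Sources = {
  checkout: ["theme.get('color.blue')", "theme.get('spacing.base')"],
  marketing: ["theme.get('color.blue-new')"],
  admin: ["const accent = tokens['color.blue'];"],
};

const report = affectedProducts(sources, ["color.blue", "color.blue-final"]);
report.forEach((products, token) => console.log(`${token}: ${products.join(", ")}`));
```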

The best migrations feel boring – no emergency Slack channels, no Friday-night deployments. Just steady, predictable progress. That’s how you know it’s working.

Automate before it breaks

Manual token updates don’t scale. Full stop.

At some point – usually around 100 tokens, definitely by 500 – manually updating token files across platforms becomes impossible to do reliably. You need automation.

Tools like Style Dictionary transform tokens from a single source into platform-specific formats automatically. Your design team updates one JSON file, and the build pipeline generates CSS variables for web, Swift code for iOS, XML for Android. One source of truth, multiple outputs.
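
Style Dictionary handles this through configurable transforms and formats, and it's what you'd reach for in practice. To show the shape of the idea without leaning on its API, here is a deliberately tiny, hand-rolled TypeScript sketch (not Style Dictionary's code) that turns one flat map of colour tokens into CSS custom properties, a Swift enum, and Android resource XML. It only handles hex colour strings, and the output naming (a `DesignTokens` enum, names built by joining path segments) is our own assumption.

```typescript
// One source of truth: flat colour tokens keyed by dot-path.
const colors: Record<string, string> = {
  "color.brand.primary": "#0055ff",
  "color.text.default": "#1a1a1a",
};

// Turn a dot-path into each platform's naming convention.
const kebab = (path: string) => path.replace(/\./g, "-");
const snake = (path: string) => path.replace(/\./g, "_");
const camel = (path: string) =>
  path
    .split(".")
    .map((part, i) => (i === 0 ? part : part[0].toUpperCase() + part.slice(1)))
    .join("");

function toCss(tokens: Record<string, string>): string {
  const body = Object.entries(tokens).map(([k, v]) => `  --${kebab(k)}: ${v};`);
  return [":root {", ...body, "}"].join("\n");
}

// Swift: exposes hex strings; a real pipeline would emit colour objects.
function toSwift(tokens: Record<string, string>): string {
  const body = Object.entries(tokens).map(([k, v]) => `    static let ${camel(k)} = "${v}"`);
  return ["enum DesignTokens {", ...body, "}"].join("\n");
}

function toAndroidXml(tokens: Record<string, string>): string {
  const body = Object.entries(tokens).map(([k, v]) => `    <color name="${snake(k)}">${v}</color>`);
  return ['<?xml version="1.0" encoding="utf-8"?>', "<resources>", ...body, "</resources>"].join("\n");
}

for (const output of [toCss(colors), toSwift(colors), toAndroidXml(colors)]) {
  console.log(output + "\n");
}
```

Everything this sketch skips, such as resolving aliases, converting units and colour formats per platform, and pluggable output formats, is where a real tool earns its place.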

Set up CI/CD pipelines that validate token changes before they ship. Automated tests catch breaking changes. Linting enforces naming conventions. Schema validation ensures structure stays consistent. Make it technically difficult to accidentally break things.
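
A sketch of the kind of check a CI job could run on every pull request: a naming lint plus a basic structural check. The naming rule (lowercase, dot-separated segments, hyphens allowed) is an assumption, the allowed types are a subset of DTCG type names, and a JSON Schema validator would be a sturdier choice for the structural part.

```typescript
interface RawToken {
  $type?: unknown;
  $value?: unknown;
}

// Assumed convention: lowercase segments joined by dots, e.g. "color.brand.primary".
const NAME_PATTERN = /^[a-z][a-z0-9-]*(\.[a-z0-9][a-z0-9-]*)*$/;
const ALLOWED_TYPES = new Set(["color", "dimension", "fontFamily", "fontWeight", "duration", "number"]);

// Collect every problem rather than stopping at the first one.
function validate(tokens: Record<string, RawToken>): string[] {
  const problems: string[] = [];
  for (const [name, token] of Object.entries(tokens)) {
    if (!NAME_PATTERN.test(name)) problems.push(`${name}: name breaks naming convention`);
    if (token.$value === undefined) problems.push(`${name}: missing $value`);
    if (typeof token.$type !== "string" || !ALLOWED_TYPES.has(token.$type)) {
      problems.push(`${name}: unknown or missing $type`);
    }
  }
  return problems;
}

const errors = validate({
  "color.brand.primary": { $type: "color", $value: "#0055ff" },
  "color.Blue_Final": { $type: "color", $value: "#0000ff" },
  "spacing.base": { $type: "size", $value: "8px" },
});

// In CI, fail the job whenever this list is non-empty.
errors.forEach((e) => console.log(e));
```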

Governance keeps it together

Token decisions need ownership. Not consensus-by-committee, but clear accountability.

Whether that’s a dedicated steward, a rotating role, or a small working group – someone needs to own the decisions. Who approves new tokens? Who decides when to deprecate? Who communicates changes? Who resolves conflicts when two teams want different things?

Without clear governance, tokens accumulate. You end up with color.blue, color.blue-new, color.blue-final, and color.blue-actually-final because nobody had authority to consolidate them.
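
A steward doesn't have to hunt for these by hand. A sketch that groups tokens sharing an identical raw value, a cheap and imperfect signal of duplication: identical values can be legitimately distinct decisions, so the output is a list for people to review, not something to merge automatically. The sample values are invented.

```typescript
// Group token names by normalised value and keep groups with more than one name.
function duplicateValues(tokens: Record<string, string>): string[][] {
  const byValue = new Map<string, string[]>();
  for (const [name, value] of Object.entries(tokens)) {
    const key = value.trim().toLowerCase(); // "#0055FF" and "#0055ff" count as the same
    const names = byValue.get(key) ?? [];
    names.push(name);
    byValue.set(key, names);
  }
  return Array.from(byValue.values()).filter((names) => names.length > 1);
}

const candidates = duplicateValues({
  "color.blue": "#0055ff",
  "color.blue-new": "#0055FF",
  "color.blue-final": "#0055ff",
  "color.blue-actually-final": "#0052f0",
  "color.text.default": "#1a1a1a",
});

candidates.forEach((group) => console.log(`Review for consolidation: ${group.join(", ")}`));
```

Note that `color.blue-actually-final` escapes this check because its value drifted slightly, which is exactly the kind of near-duplicate that still needs a human with the authority to decide.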

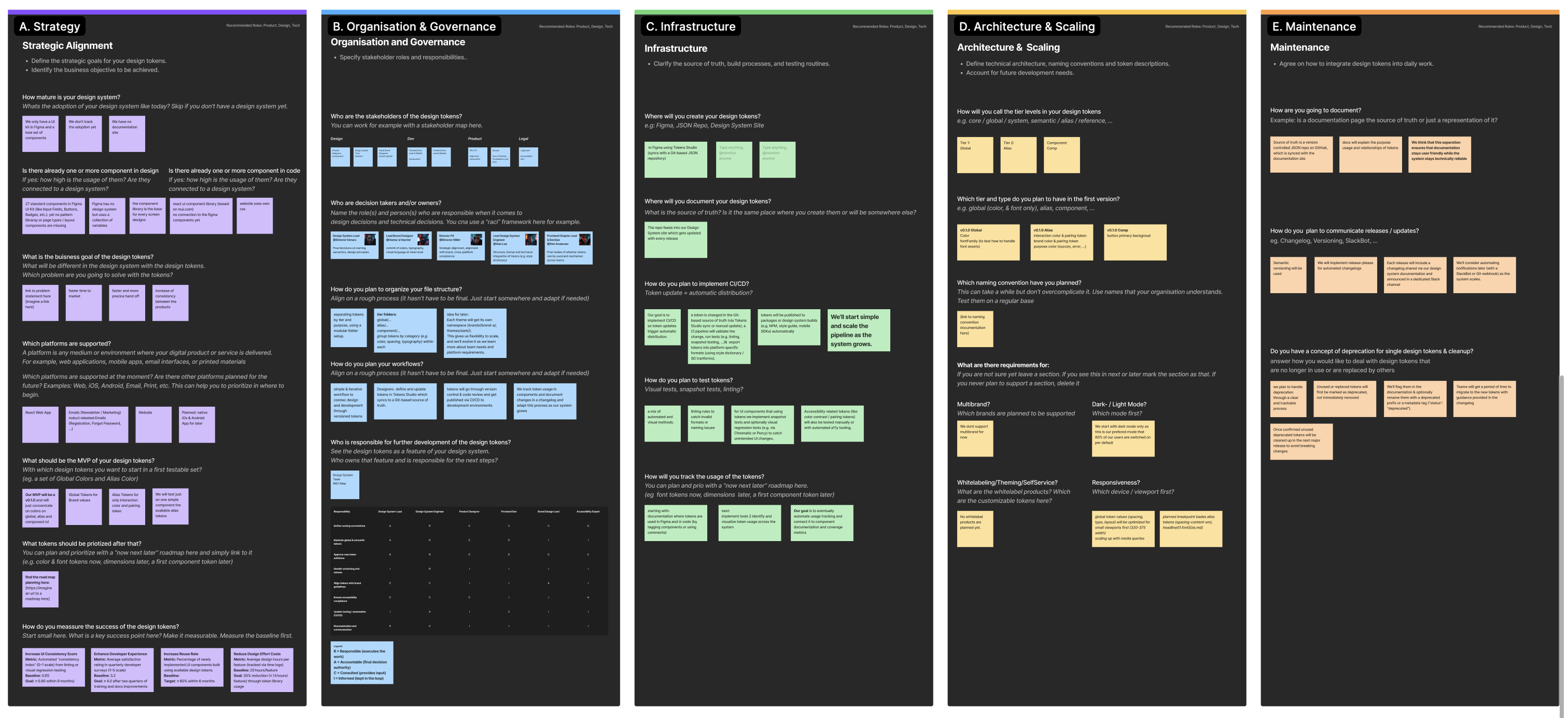

Philipp Jeroma’s Design Token Starter Canvas (presented at Into Design Systems 2025) gives you a practical framework to work through this. It covers strategy, governance, infrastructure, architecture, and maintenance. Not a checklist you complete once, but a workspace you return to as your system evolves and new questions emerge.

The governance model you need will depend heavily on your organisation: what works for a 10-person startup will differ from what works for a 10,000-person enterprise. But regardless of size, every system needs someone who can make the call when a decision is needed.


Scaling across products and platforms

Multiple products, brands, themes, and modes simultaneously – this is where token architecture either proves itself or collapses.

Your system starts simple – one brand, one theme. Then come light and dark modes, secondary brands, white-label products, mobile apps, and accessibility variants. Each adds another layer of dependency.

The answer isn’t adding more tokens – it’s designing a better hierarchy.

Use tiered architectures. Separate foundational values (base colours and scales), semantic tokens (how those values are applied), and component-specific tokens (what individual components use).

With the right structure, you can swap an entire theme by changing a single reference layer. With the wrong one, you’re manually updating hundreds of values and praying nothing breaks.
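
A minimal sketch of that layering, using the curly-brace alias syntax (`{color.action}`) from the DTCG format. Primitives hold raw values, each theme is a semantic layer that only references primitives, and component tokens only reference semantic tokens, so switching theme means swapping one layer. The token names and the `resolve` helper are illustrative assumptions.

```typescript
type Layer = Record<string, string>;

// Tier 1: primitives (raw values).
const primitives: Layer = {
  "blue.600": "#0055ff",
  "blue.300": "#7aa7ff",
  "gray.50": "#f7f7f8",
  "gray.900": "#141416",
};

// Tier 2: semantic tokens, one layer per theme, referencing primitives.
const themes: Record<string, Layer> = {
  light: { "color.action": "{blue.600}", "color.surface": "{gray.50}" },
  dark: { "color.action": "{blue.300}", "color.surface": "{gray.900}" },
};

// Tier 3: component tokens, referencing semantic tokens only.
const components: Layer = {
  "button.background.primary": "{color.action}",
  "card.background": "{color.surface}",
};

// Follow {alias} references through the merged layers until a raw value is reached.
function resolve(name: string, layers: Layer, seen: Set<string> = new Set<string>()): string {
  if (seen.has(name)) throw new Error(`Circular reference at ${name}`);
  const value = layers[name];
  if (value === undefined) throw new Error(`Unknown token ${name}`);
  const alias = /^\{(.+)\}$/.exec(value);
  if (!alias) return value;
  seen.add(name);
  return resolve(alias[1], layers, seen);
}

// Build resolved component values for one theme by swapping in its semantic layer.
function buildTheme(theme: string): Layer {
  const layers = { ...primitives, ...themes[theme], ...components };
  const output: Layer = {};
  for (const name of Object.keys(components)) output[name] = resolve(name, layers);
  return output;
}

console.log(buildTheme("light")["button.background.primary"]); // #0055ff
console.log(buildTheme("dark")["button.background.primary"]);  // #7aa7ff
```

Component tokens never change between themes here; only the semantic layer does, which is what keeps a new theme or brand from turning into hundreds of manual edits.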

The AI factor

As I wrote in “Your next design system user is an agent”, AI tools are becoming consumers of your token system. They read your tokens, interpret your naming, and generate code that carries those assumptions forward.

Well-structured token systems that use semantic naming and clear documentation will produce decent AI-generated code, while an ambiguous token structure with inconsistent patterns will produce terrible output.

Clarity helps both machines and humans. When everyone – person or agent – can read your intent, your system starts working for you, not against you.

Where to start

Pick one painful area – versioning, migration paths, documentation – and start there.

Audit your existing tokens – are they organised logically? Do they have clear purposes? Can someone new understand what each token does?

Involve your team early – token architecture isn’t solo work. Get designers and developers together and run naming workshops using real interface examples rather than abstract planning.

Document decisions as you make them – why that naming convention? What trade-offs did you consider? Leave breadcrumbs.

Start small, but start now. Even a single validation script is infrastructure thinking that pays dividends later.

The mindset shift

Treating tokens as infrastructure means accepting they’re never done – they’re living systems that demand maintenance, governance, and thoughtful evolution.

It means learning to be okay with deprecating things, with breaking a few eggs now and then, with having difficult conversations about what should and shouldn’t be a token.

The goal isn’t perfection – it’s sustainability.

A token system that grows, adapts, and survives leadership changes is worth more than one that’s theoretically perfect but practically unmaintainable.

Teams that succeed don’t have magical architectures – they have realistic ones. They’ve accepted the constraints, built systems that work within them, and created processes to evolve over time.

So – are your tokens infrastructure yet?

The real question isn’t whether they should be. It’s whether they already are.

Multiple teams depend on them. Changing them requires coordination. Breaking them has real impact. They’re already infrastructure – whether you treat them that way or not.

The gap between what your tokens are and how you’re managing them is where the risk lives. Close that gap, and tokens stop being a source of anxiety. They become the reliable foundation they were meant to be.

That silence when someone suggests changing a token? It’s not fear – it’s recognition. You’ve crossed the line. The work now is making change safe again.

Thanks for reading! This article is also available on Medium, where I share more posts like this. If you’re active there, feel free to follow me for updates.

I’d love to stay connected – join the conversation on X, Bluesky, or connect with me on LinkedIn to talk design, digital products, and everything in between.
